Don’t deploy Large Language Models (LLMs) or NMT engines based on marketing claims. We provide objective, data-driven Automated Metrics and MQM-based human benchmarks to validate engine performance, optimize custom training, and mitigate linguistic risk.

Actionable quality intelligence for machine translation builders and users

Model Training and Fine-Tuning

Quality feedback loops for data scientists training models. We provide the error typology, training datasets and analytics to improve model behavior.

Implement AI in Localization Teams

Determine which SOTA models (LLM or NMT) and workflows best fit your languages and content types.

For Procurement: RFP & Vendor Benchmarking

Objective, third-party validation during RFPs. Compare machine translation providers on language quality and performance SLA metrics.

Continuous LQA Monitoring

Solve systemic issues like Japanese honorifics or Baltic grammar. Consistent monitoring keeps your user experience at a high standard in every locale.

A one-size-fits-all approach does not work for enterprise machine translation. Different project phases demand different assessment depths. We designed our methodology to provide exactly the right level of rigor based on your data volume, budget, and linguistic risk. Whether you need rapid automated benchmarking for million-word NMT datasets or deep human annotation for highly regulated LLM outputs, our tiered framework delivers precise intelligence.

| Tier | Methodology & Tools | Metrics | Volume & Sampling |
|---|---|---|---|
| Automated | Reference-based via Best Engine Console | BLEU, WER, TER | 1,000 – 10,000 sentences |
| AI-Assisted | Reference-free LLM-as-a-Judge (GPT, Grok, Claude) | Quality Estimation (QE) | 400 – 1,000 sentences |
| Rapid Human | ABC Testing & Contrastive Error Span Annotation | Fast Error Spans | 300 – 400 segments (Representative sample) |
| Expert Human | Detailed Human Linguistic Audit | MQM Error Classification | 300 – 400 segments (Representative sample) |
| Technical | API Capacity Testing via Promptsit Bench | P95, P99, Throughput, Latency | API Stress Test |

Achieving “Gold Standard” GenAI translation evaluation takes more than bilingual reviewers alone. We combine professional tooling, statistical validation, and optimized human workflows to deliver defensible metrics without breaking your localization budget.

Advanced Annotation

We utilize Label Studio for professional-grade linguistic annotation, ensuring every error is classified by severity and category.

Statistical Verification

Results are validated using Statistical Significance Checks and Inter-Annotator Agreement (IAA) (Krippendorff’s alpha or Cohen’s kappa) to measure and limit subjectivity.

Economic Optimization

Our proprietary 2-Linguist + 1-Arbitrator workflow spends senior time only where it is needed. Two linguists review the dataset, and our Senior Arbitrator steps in only to resolve disputed segments. Every disagreement is resolved, and your human evaluation costs drop by 20%.
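
As a rough illustration of the routing step, the sketch below splits segments into those both linguists agree on and those that would go to an arbitrator. The label schema (one severity label per segment ID) and the function name are assumptions made for this example, not a description of our production tooling.

```python
def split_for_arbitration(labels_a, labels_b):
    """Return (agreed, disputed) segment IDs for two linguists' labels.

    labels_a and labels_b map segment ID -> severity label.
    This schema is hypothetical and chosen only for illustration.
    """
    agreed, disputed = [], []
    # Only segments annotated by both linguists can be compared.
    for seg_id in sorted(labels_a.keys() & labels_b.keys()):
        if labels_a[seg_id] == labels_b[seg_id]:
            agreed.append(seg_id)
        else:
            disputed.append(seg_id)
    return agreed, disputed


# Invented example annotations.
linguist_a = {1: "minor", 2: "major", 3: "none", 4: "major"}
linguist_b = {1: "minor", 2: "minor", 3: "none", 4: "major"}

agreed, disputed = split_for_arbitration(linguist_a, linguist_b)
print("Agreed:", agreed)
print("Sent to arbitrator:", disputed)
```

In this toy data only one of four segments needs a third opinion, which is where the cost saving over a full three-reviewer pass would come from.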

Challenge Datasets

We curate high-difficulty datasets to stress-test engines on complex grammar, terminology, and cultural nuances.

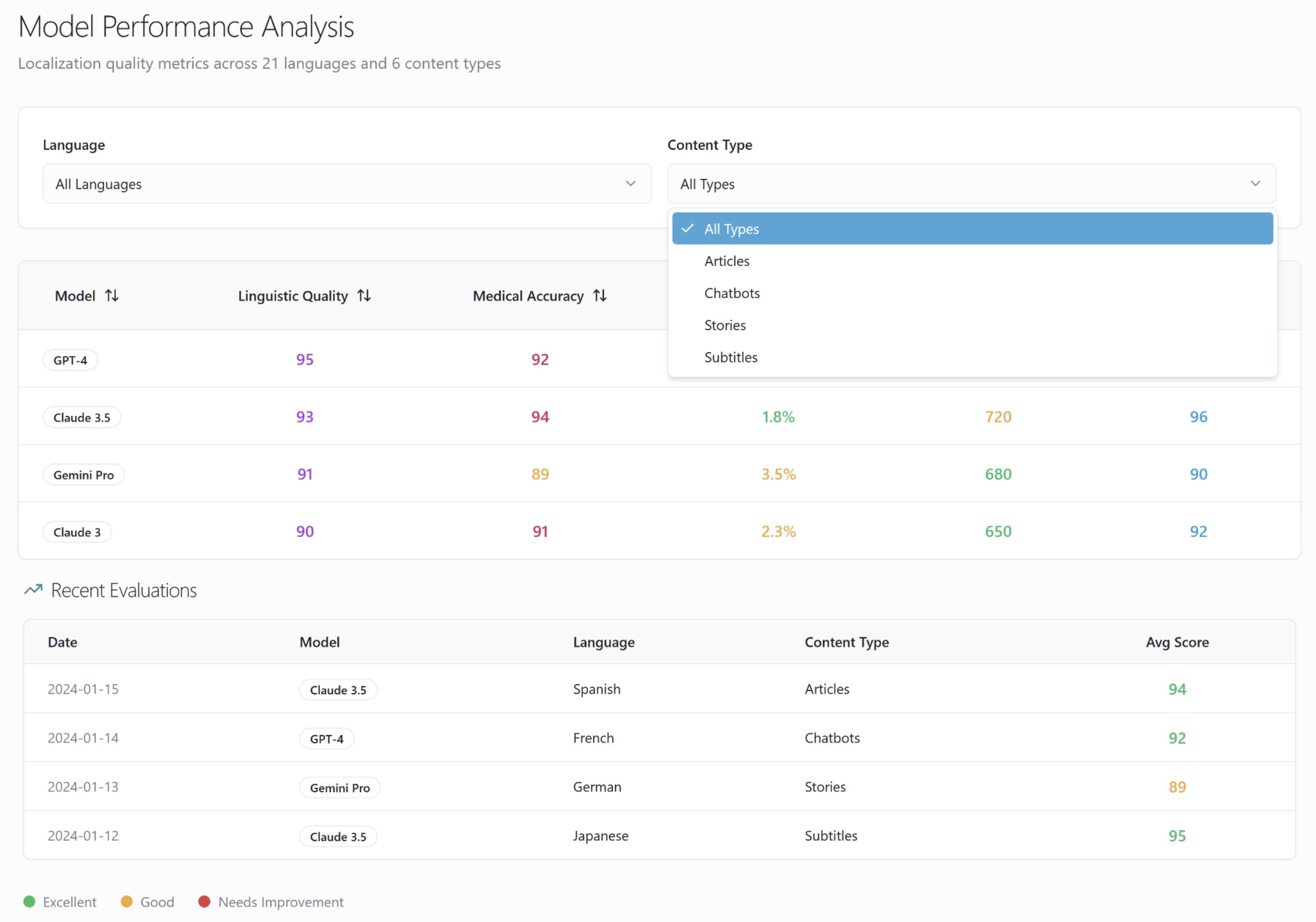

Evaluating AI translation models requires more than raw data. Our enterprise benchmark reports transform complex linguistic annotations into clear visual dashboards comparing industry leaders like GPT-4o, Claude 3, DeepL, and Google NMT. We give localization teams the exact machine translation metrics needed to make confident deployment decisions.

Benchmarking and deploying enterprise-grade MT AI demands both high-performing APIs and pristine linguistic data. We provide the technical backbone for both.

Custom Model Fine-Tuning for IT Teams

Technical feedback loops for engineers training high-volume models. We provide the precise error typology required to retrain and improve model behavior.

Localization Data Integration

We process TMs and Glossaries via a custom data pipe, eliminating repetitions and cleaning datasets to ensure evaluation is based on high-signal content.
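
A minimal sketch of what removing repetitions can look like for translation memory pairs is shown below. The normalization rules (collapsing whitespace, case-insensitive matching, dropping empty segments) are assumptions for illustration; a real pipeline would also need to handle tags, placeholders, and file formats such as TMX.

```python
def clean_translation_memory(pairs):
    """Drop empty and repeated (source, target) pairs, keeping first occurrences.

    The normalization used here is an illustrative assumption.
    """
    seen = set()
    cleaned = []
    for source, target in pairs:
        # Collapse runs of whitespace and trim both sides.
        source_norm = " ".join(source.split())
        target_norm = " ".join(target.split())
        if not source_norm or not target_norm:
            continue  # skip pairs with an empty side
        key = (source_norm.casefold(), target_norm.casefold())
        if key in seen:
            continue  # skip repetitions
        seen.add(key)
        cleaned.append((source_norm, target_norm))
    return cleaned


# Invented example data.
raw_pairs = [
    ("Save file", "Datei speichern"),
    ("Save  file ", "Datei speichern"),
    ("", "leer"),
    ("Open", "Öffnen"),
]
cleaned_pairs = clean_translation_memory(raw_pairs)
print(cleaned_pairs)
```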

Navigating AI translation requires understanding the underlying data. Here is a breakdown of the industry-standard metrics we use to benchmark Neural Machine Translation (NMT) and Large Language Models (LLMs), from automated n-gram scoring to deep human linguistic analysis.

| Metric & Acronym | Scientific Description | Best Localization Enterprise Use Case |
|---|---|---|
| BLEU (Bilingual Evaluation Understudy) | The foundational automated metric. It evaluates translation quality by calculating the n-gram overlap (lexical similarity) between the machine output and a human 'gold standard' reference translation. | High-Volume Benchmarking: Ideal for rapidly testing base engine performance across massive datasets (10,000+ segments) when human references already exist. |
| TER (Translation Edit Rate) | A distance metric that counts the minimum number of edits (insertions, deletions, substitutions, and shifts) required to transform machine output into the reference translation, normalized by the reference length. | MTPE Cost Estimation: The industry standard for predicting Post-Editing (MTPE) effort, setting vendor pricing, and estimating project turnaround times. |
| WER (Word Error Rate) | Similar to TER but strictly evaluates discrepancies at the word level (substitutions, deletions, insertions) without accounting for word 'shifts' or semantic reordering. | Terminology & Speech AI: Best used for assessing strict glossary adherence and evaluating ASR (Automatic Speech Recognition) integrated MT workflows. |
| QE (Quality Estimation) | Reference-free evaluation. Uses supervised AI or State-of-the-Art LLMs (LLM-as-a-judge) to predict the quality of a translation based solely on the source text and MT output, with no human reference required. | Agile GenAI Deployment: Perfect for real-time routing (flagging poor MT segments for human review instantly) and evaluating dynamic LLM outputs on the fly. |
| ESA (Error Span Annotation) | A human-in-the-loop methodology where evaluators do not grade the whole sentence, but explicitly highlight the exact 'spans' (chunks of text) containing the localized error. | Custom Model Training: Provides NLP engineers with pinpoint, targeted data to fix highly specific recurring hallucinations in custom NMTs. |
| Contrastive ESA (Contrastive Error Span Annotation) | [WMT 2024 Standard] Human evaluators compare two different MT outputs side-by-side, explicitly highlighting and classifying relative error spans to definitively prove which engine produced the superior output. | RFP Engine A/B Testing: The ultimate tool for vendor selection. Use this to prove exactly why Engine A beats Engine B in head-to-head enterprise procurement audits. |
| MQM / DQF (Multidimensional Quality Metrics / Dynamic Quality Framework) | The industry's most rigorous framework for analytic human evaluation. Errors are systematically classified by specific typologies (e.g., Terminology, Syntax) and weighted by severity (e.g., Minor, Major, Catastrophic). | High-Risk Quality Governance: Mandatory for linguistic audits of highly regulated, medical, or legal content where safety and total compliance are non-negotiable. |
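
To make the edit-based metrics in the table concrete, here is a minimal sketch of WER computed as a word-level edit distance divided by the reference length. It assumes simple whitespace tokenization and deliberately omits the shift operation that TER adds, so treat it as an illustration of the idea and not as a replacement for established scoring tools.

```python
def word_edit_distance(hyp_words, ref_words):
    """Levenshtein distance over word tokens (insert, delete, substitute)."""
    prev = list(range(len(ref_words) + 1))
    for i, hyp_word in enumerate(hyp_words, start=1):
        curr = [i]
        for j, ref_word in enumerate(ref_words, start=1):
            cost = 0 if hyp_word == ref_word else 1
            curr.append(min(
                prev[j] + 1,         # delete a hypothesis word
                curr[j - 1] + 1,     # insert a reference word
                prev[j - 1] + cost,  # match or substitute
            ))
        prev = curr
    return prev[-1]


def wer(hypothesis, reference):
    """Word Error Rate: word-level edits divided by reference length."""
    hyp_words, ref_words = hypothesis.split(), reference.split()
    if not ref_words:
        raise ValueError("reference must not be empty")
    return word_edit_distance(hyp_words, ref_words) / len(ref_words)


# Invented example: one word is missing from a six-word reference.
score = wer("the cat sat on mat", "the cat sat on the mat")
print(round(score, 3))
```

Lower is better: a score of 0 means the output matches the reference word for word, and one missing word out of six gives roughly 0.167.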

We use Label Studio, a professional-grade data labeling tool, to conduct our human evaluations. This allows our domain-expert linguists to perform highly precise Multidimensional Quality Metrics (MQM) error annotation and classify translation errors by severity. Read more in our blog: https://custom.mt/tools-for-data-labelling-in-machine-translation-evaluations/.

Translation quality isn’t just about linguistics; it’s also about speed. We use Promptsit Bench to conduct rigorous technical evaluations, measuring P95 and P99 latency, API throughput, and system stability under high-volume production loads.
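
For readers unfamiliar with tail-latency metrics, the sketch below shows one common way to derive P95, P99, and throughput from a list of per-request latencies. It uses the nearest-rank percentile definition, which is an assumption on our part (other tools interpolate), and the latency values are synthetic, chosen so the result is easy to check by eye.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least
    pct percent of all values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def throughput(request_count, elapsed_seconds):
    """Completed requests per second over the test window."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return request_count / elapsed_seconds


# Synthetic latencies: 1 ms, 2 ms, ..., 100 ms.
latencies_ms = list(range(1, 101))

p95 = percentile(latencies_ms, 95)
p99 = percentile(latencies_ms, 99)
rps = throughput(len(latencies_ms), elapsed_seconds=20.0)
print(p95, p99, rps)
```

P95 and P99 matter more than the average for production planning because they describe the slowest requests users will actually experience.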

Traditional automated metrics (like BLEU, WER, and TER) compare MT output against a human reference translation. We handle this at scale (up to 10,000+ segments) via the Best Engine in Custom.MT Console. LLM-as-a-Judge, on the other hand, uses State-of-the-Art models (like GPT-4o or Claude) for reference-free Quality Estimation (QE), analyzing 400-1,000 segments for fluency and accuracy without needing a pre-existing human translation.

Subjectivity is a major risk in localization. We control it by measuring Inter-Annotator Agreement (IAA) with Krippendorff’s alpha or Cohen’s kappa. In addition, our 2-Linguist + 1-Arbitrator workflow ensures consensus on error severity while reducing overall evaluation costs by 20%.
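
As an illustration of how agreement is quantified, here is a minimal sketch of Cohen’s kappa for two annotators who each assign one severity label per segment. The label set and sample data are invented for the example; Krippendorff’s alpha, which handles more annotators and missing labels, is not shown.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa: observed agreement corrected for chance agreement."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both pick the same label independently.
    categories = freq_a.keys() | freq_b.keys()
    expected = sum(freq_a[c] * freq_b[c] for c in categories) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


# Invented severity labels for eight segments.
annotator_a = ["minor", "major", "none", "major", "none", "minor", "none", "none"]
annotator_b = ["minor", "minor", "none", "major", "none", "minor", "none", "major"]

kappa = cohens_kappa(annotator_a, annotator_b)
print(round(kappa, 3))
```

Here the annotators agree on six of eight segments (75%), but kappa comes out at about 0.619 because some of that agreement would be expected by chance.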

MQM (Multidimensional Quality Metrics) is a standardized framework for assessing translation quality. Instead of a simple “pass/fail,” our linguists use MQM to tag specific errors (e.g., terminology, syntax, omission) and weight them by severity—ranging from minor stylistic issues to catastrophic medical or safety errors.
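
To show how severity weighting turns tagged errors into a single number, the sketch below computes penalty points per 1,000 words. The weights (1, 5, 25) and the normalization are illustrative assumptions; real projects set their own weights and thresholds.

```python
# Assumed severity weights, for illustration only.
SEVERITY_WEIGHTS = {"minor": 1, "major": 5, "catastrophic": 25}


def mqm_penalty_per_1000_words(errors, word_count):
    """Sum severity-weighted penalties and normalize per 1,000 words.

    errors is a list of (category, severity) tuples.
    """
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    total = sum(SEVERITY_WEIGHTS[severity] for _category, severity in errors)
    return total * 1000 / word_count


# Invented annotations for a 3,500-word sample.
tagged_errors = [
    ("terminology", "minor"),
    ("syntax", "major"),
    ("omission", "minor"),
]
penalty = mqm_penalty_per_1000_words(tagged_errors, word_count=3500)
print(penalty)
```

Normalizing by word count lets you compare samples of different sizes, and keeping the category alongside the severity preserves the detail needed to see where an engine fails.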

Yes. We frequently benchmark prompt-engineered LLMs (using style guides and glossaries) against established Neural Machine Translation (NMT) engines like DeepL, Google AutoML, and ModernMT to see which technology handles specific content types better.

It depends on the methodology. For automated reference-based scoring (BLEU, WER, TER), we recommend 1,000 to 10,000 segments. For LLM-as-a-judge Quality Estimation, we typically analyze 400 to 1,000 segments. For detailed human MQM audits, a highly curated representative sample of 300 to 400 segments is sufficient to achieve statistical significance.
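
One way to sanity-check a sample size is the standard margin-of-error formula for a proportion, for example the share of segments containing at least one major error. The sketch below assumes simple random sampling, a 95% confidence level (z = 1.96), and the worst-case proportion of 0.5. It is a general statistical rule of thumb offered as context, not the specific calculation behind the figures above.

```python
import math


def margin_of_error(sample_size, proportion=0.5, z=1.96):
    """Half-width of the confidence interval for an estimated proportion."""
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    return z * math.sqrt(proportion * (1 - proportion) / sample_size)


for n in (300, 400, 1000):
    print(n, round(margin_of_error(n) * 100, 1), "percentage points")
```

Under these assumptions, 300 to 400 segments give a margin of roughly 5 to 6 percentage points, and going to 1,000 segments narrows it to about 3.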

Partner with Custom.MT to build a professional evaluation program that justifies your MT AI investments and protects your brand’s global reputation.